Neural Network Tutorials - Herong's Tutorial Examples - v1.22, by Herong Yang

Impact of Neural Network Configuration

This section provides a tutorial example to demonstrate the impact of the neural network configuration, that is, the number of hidden layers and the number of neurons in each hidden layer. Fewer layers with more neurons per layer seem to work better than more layers with fewer neurons per layer.

When using a multi-layer neural network, we need to decide on its configuration: the number of hidden layers and the number of neurons in each hidden layer. The most frequently asked question is: "Should I use more hidden layers or more neurons in each layer if the total number of neurons is fixed?"
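To make the "fixed total number of neurons" constraint concrete, here is a minimal Python sketch that lists every way to split a neuron budget evenly across hidden layers. The function name `equal_width_configs` is hypothetical and used only for illustration; it is not part of Deep Playground.

```python
def equal_width_configs(total_neurons):
    """Return (layers, neurons_per_layer) pairs whose product is total_neurons."""
    return [
        (layers, total_neurons // layers)
        for layers in range(1, total_neurons + 1)
        if total_neurons % layers == 0  # keep only even splits
    ]


configs = equal_width_configs(12)
for layers, width in configs:
    print(f"{layers} layer(s) x {width} neuron(s) per layer")
```

For a budget of 12 neurons, the list includes the four configurations tried in this tutorial: 2 x 6, 3 x 4, 4 x 3 and 6 x 2.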

Let's see if we can find the answer by solving the complex classification problem in Deep Playground.

1. Continue with the previous tutorial.

2. Set "Ratio of training to test data" to 90%.

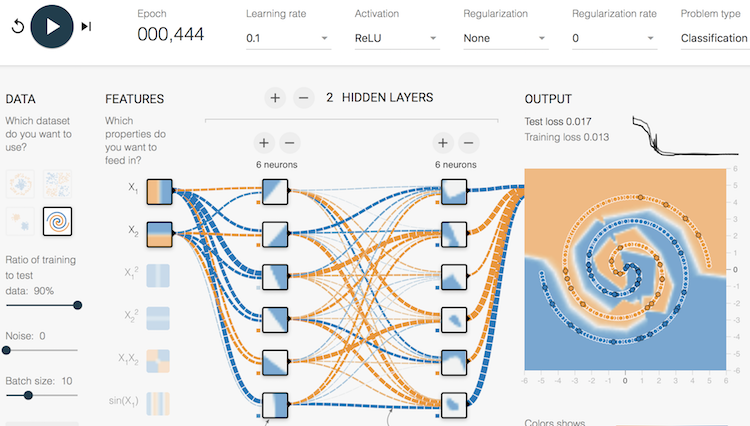

3. Change the hidden layer configuration to 2 layers with 6 neurons in each layer. The total number of neurons is 12.

4. Play the model. It should reach a good solution with no problem.

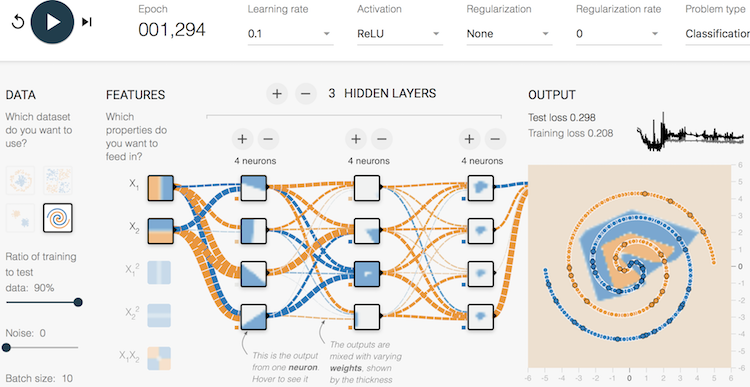

5. Change the hidden layer configuration to 3 layers with 4 neurons in each layer. The total number of neurons is 12, unchanged. Play the model again. It should reach a poor solution with a test loss of 0.298.

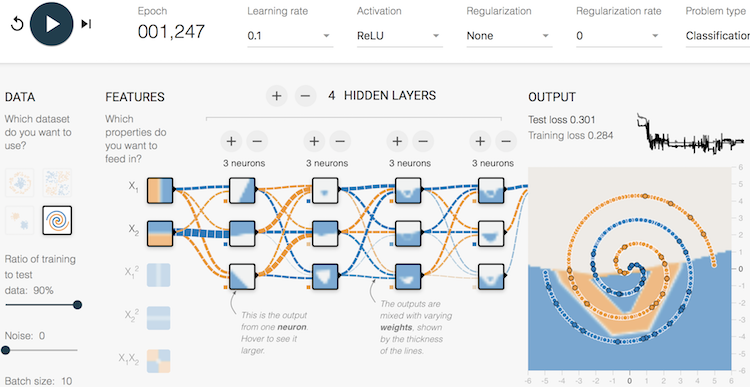

6. Change the hidden layer configuration to 4 layers with 3 neurons in each layer. The total number of neurons is 12, unchanged. Play the model again. It should reach a poor solution with a test loss of 0.301.

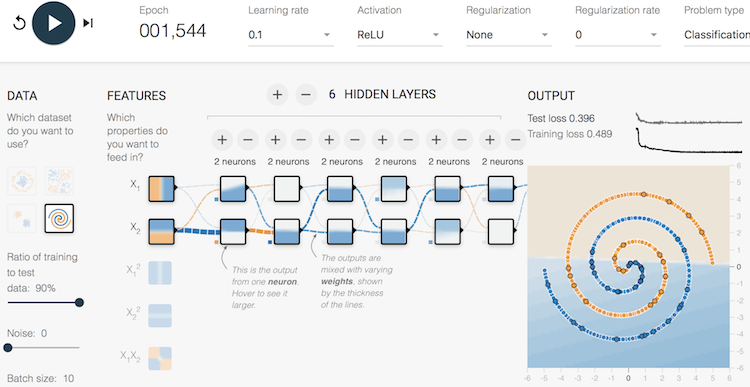

7. Change the hidden layer configuration to 6 layers with 2 neurons in each layer. The total number of neurons is 12, unchanged. Play the model again. It fails to reach any solution.

Conclusion: for a given total number of neurons, fewer layers with more neurons per layer seem to work better than more layers with fewer neurons per layer.
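One thing worth noting is that the four configurations have the same number of neurons but not the same number of trainable parameters. The sketch below counts weights and biases for each one. It assumes a fully connected network with 2 input features (X1 and X2) and 1 output neuron; the actual input count depends on which features were left selected in the previous tutorial. The helper `count_parameters` is a hypothetical name, not a Deep Playground function.

```python
def count_parameters(n_inputs, hidden_layers, n_outputs=1):
    """Count weights and biases in a fully connected feed-forward network."""
    sizes = [n_inputs] + list(hidden_layers) + [n_outputs]
    # Each neuron has one weight per incoming connection plus one bias.
    return sum((fan_in + 1) * fan_out for fan_in, fan_out in zip(sizes, sizes[1:]))


N_INPUTS = 2  # assumption: only the X1 and X2 input features are selected

hidden_configs = {
    "2 x 6": [6, 6],
    "3 x 4": [4, 4, 4],
    "4 x 3": [3, 3, 3, 3],
    "6 x 2": [2, 2, 2, 2, 2, 2],
}

param_counts = {
    name: count_parameters(N_INPUTS, layers)
    for name, layers in hidden_configs.items()
}

for name, count in param_counts.items():
    print(f"{name}: {count} trainable parameters")
```

Under these assumptions the counts are 67, 57, 49 and 39, so the wider and shallower networks have more parameters to fit with. This may be one contributing factor to the results above, but it is not a full explanation: narrow layers also restrict what each layer can pass on to the next, and deeper networks can be harder to train.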
