Neural Network Tutorials - Herong's Tutorial Examples - v1.22, by Herong Yang

Complex Model in Playground

This section provides a tutorial example on how to build a more complex neural network model with Deep Playground to solve a classification problem on a complex sample dataset. A single hidden layer with 8 neurons is not enough to solve this problem.

After playing enough with the simple classification problem in Deep Playground, let's jump to a more complex problem.

1. Go to the Web-based version of Deep Playground at https://playground.tensorflow.org.

2. Keep "Problem Type" as "Classification".

3. Select the fourth dataset pattern, where the two output groups form spirals winding around each other. This is the most complex learning task of the four dataset patterns, unless we reverse-engineer the mathematical function that generates the sample dataset.

4. Change "Ratio of training to test data" to 90%. Only 10% of the data is left for testing.

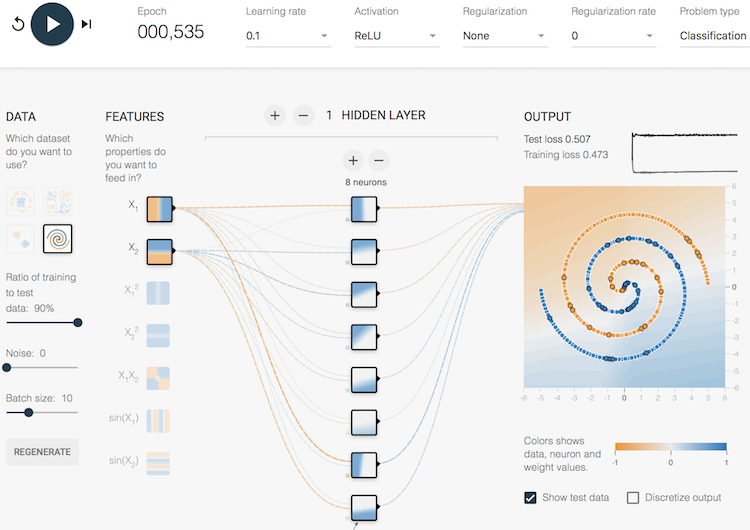

5. Add 1 hidden layer with 8 neurons, which is the maximum number of neurons supported in a single layer in Deep Playground.

6. Select the ReLU (Rectified Linear Unit) function as the activation function.

7. Click the "play" icon to watch the training progress. Unfortunately, the model fails to converge to a good solution. The training loss stays at about 0.47, as shown below.

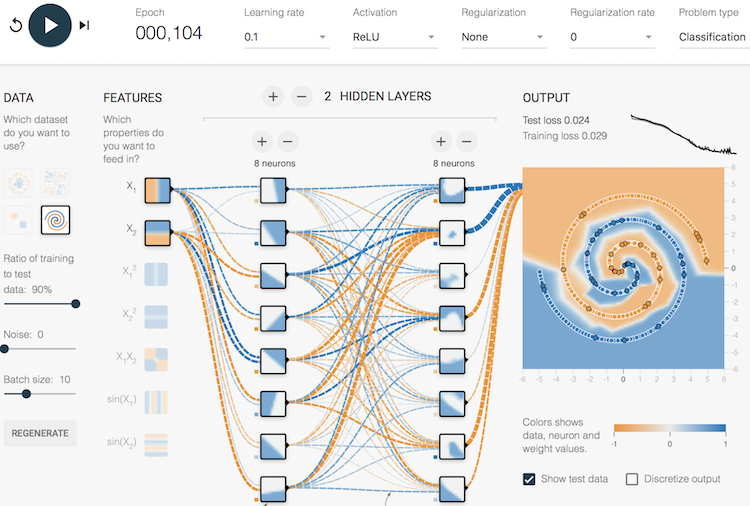

8. Add 1 more hidden layer with 8 neurons and play it again. The model should reach a good solution in about 100 epochs, with the training loss dropping to about 0.029. The solution, represented by the background color, matches nearly all samples, as shown below.

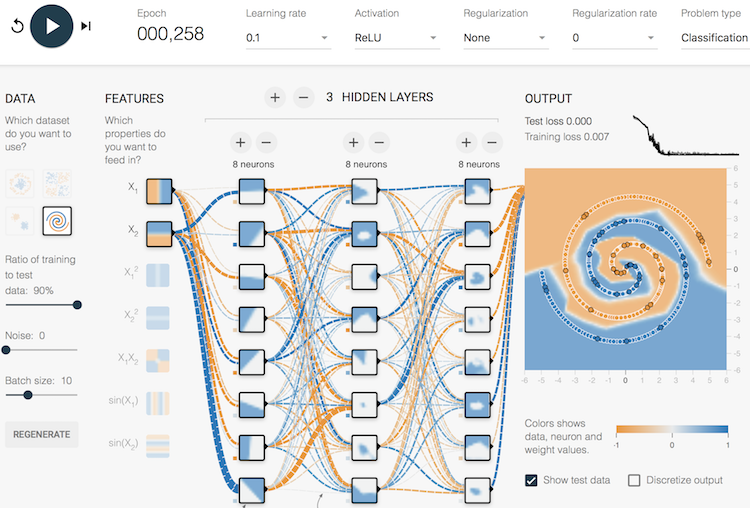

9. Let's try 3 hidden layers with 8 neurons in each layer. You should see a slightly better solution.
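The dataset and split chosen in steps 3 and 4 can be sketched outside the browser too. The Python code below is only an illustration: the function names make_spirals() and split_train_test(), the sample count of 500, the radius of 5 and the 1.75 turns per arm are assumptions made for this sketch, not values confirmed by this tutorial. It generates two spiral arms labelled +1 and -1, then keeps 90% of the samples for training.

```python
import numpy as np

def make_spirals(n_samples=500, noise=0.0, seed=0):
    """Return (points, labels) for two interleaved spiral arms.

    All constants here (radius 5, 1.75 turns) are assumptions for this sketch.
    """
    rng = np.random.default_rng(seed)
    n = n_samples // 2
    frac = np.arange(n) / n                        # 0 up to just under 1 along an arm

    def arm(delta_t, label):
        r = 5.0 * frac                             # radius grows from 0 toward 5
        t = 1.75 * 2.0 * np.pi * frac + delta_t    # angle sweeps 1.75 turns
        x = r * np.sin(t) + rng.uniform(-1, 1, n) * noise
        y = r * np.cos(t) + rng.uniform(-1, 1, n) * noise
        return np.column_stack([x, y]), np.full(n, label)

    pts_a, lab_a = arm(0.0, 1)
    pts_b, lab_b = arm(np.pi, -1)                  # second arm rotated by half a turn
    return np.vstack([pts_a, pts_b]), np.concatenate([lab_a, lab_b])

def split_train_test(points, labels, train_ratio=0.9, seed=0):
    """Shuffle the samples and keep train_ratio of them for training."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(points))
    cut = int(round(train_ratio * len(points)))
    train, test = order[:cut], order[cut:]
    return points[train], labels[train], points[test], labels[test]

points, labels = make_spirals(n_samples=500)
x_train, y_train, x_test, y_test = split_train_test(points, labels, 0.9)
print(x_train.shape, x_test.shape)   # (450, 2) (50, 2)
```

With a 90% ratio, only 50 of the 500 samples are held back, so the test loss is estimated from a small set and can fluctuate more than the training loss.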

In conclusion, we need at least 2 hidden layers of 8 neurons with the ReLU activation function to solve this complex classification problem. Adding more hidden layers beyond that does not improve the accuracy of the solution very much.
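To get a feel for how the three configurations differ in size, here is a minimal Python sketch that counts the trainable weights and biases for 1, 2 and 3 hidden layers of 8 neurons. It assumes 2 input features (X1 and X2) and a single output neuron with a tanh output; the helper names layer_sizes(), count_parameters(), init_network() and forward() are hypothetical and not part of Deep Playground. No training is performed; the code only builds the layers and runs a forward pass.

```python
import numpy as np

def layer_sizes(n_hidden_layers, width=8, n_inputs=2, n_outputs=1):
    """Neurons per layer, e.g. [2, 8, 8, 1] for 2 hidden layers (assumed shape)."""
    return [n_inputs] + [width] * n_hidden_layers + [n_outputs]

def count_parameters(sizes):
    """One weight per connection plus one bias per receiving neuron."""
    return sum(a * b + b for a, b in zip(sizes[:-1], sizes[1:]))

def init_network(sizes, seed=0):
    """Random weights and zero biases for each pair of adjacent layers."""
    rng = np.random.default_rng(seed)
    return [(rng.normal(0.0, 0.5, (a, b)), np.zeros(b))
            for a, b in zip(sizes[:-1], sizes[1:])]

def forward(network, x):
    """Forward pass: ReLU on hidden layers, tanh on the output (an assumption)."""
    h = x
    for w, b in network[:-1]:
        h = np.maximum(0.0, h @ w + b)   # ReLU activation
    w, b = network[-1]
    return np.tanh(h @ w + b)            # output in (-1, 1), one value per sample

for depth in (1, 2, 3):
    sizes = layer_sizes(depth)
    print(depth, sizes, count_parameters(sizes))
# 1 [2, 8, 1] 33
# 2 [2, 8, 8, 1] 105
# 3 [2, 8, 8, 8, 1] 177
```

Going from 1 to 2 hidden layers roughly triples the parameter count, and each extra layer adds another 72 parameters. The counts alone do not explain the results seen in the playground, but they show how quickly model capacity grows with depth.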
